
Unless you live on a far-flung island, you have probably come across the term ‘deep learning’ at least a couple of times while browsing the internet. Put simply, deep learning is a subset of machine learning in which models learn from examples, much as humans do.

Deep learning teaches a machine to process inputs through successive layers in order to predict and classify information. These inputs can be images, text or sound.

Similar to how humans learn from their own experience, deep learning models learn from large sets of data. They repeat the same task of classifying/predicting data over and over again in order to improve their accuracy in getting the desired results.

Any deep learning model learns using an artificial neural network.

Let us first try to understand what these artificial neural networks are.

Deep learning tries to mimic the human brain in filtering and processing information.

In the human brain, there are roughly 100 billion neurons (more recent estimates put the figure closer to 86 billion). Each of these neurons connects to thousands of other neurons, typically estimated at around 1,000 to 10,000. As a result, a complex network of neurons is created. See image below.

Figure 1: The human brain has a complex network of roughly 100 billion neurons.

Deep learning tries to replicate this complex network, but in a way that works for machines. So, while in a human brain we have real brain cells, deep learning models have interconnected processing elements (neurons) that work together to solve a specific problem.

In its simplest form, a neuron is something that holds a number between 0 and 1.

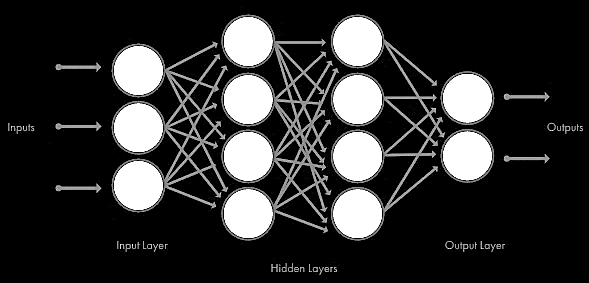

In a deep learning architecture, these neurons are sorted in three different kinds of layers:

Input Layer: This layer of neural network receives the data (in the form of text, image or sound) and then passes it on to the hidden layers for processing.

Hidden Layer(s): This is the second part of the neural network, where most of the mathematical computation takes place. A neural network can have anywhere from one to hundreds of hidden layers.

Output Layer: This layer gathers the computation done by the hidden layers and presents it in a way humans can understand.

The image below depicts the architecture of a deep learning model:

Figure 2: The image shows the architecture of a deep learning model.
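To get a feel for how quickly such an architecture grows, here is a minimal sketch that counts the weights and biases in a fully connected network. The layer sizes are hypothetical and chosen only for illustration (a 28x28 pixel image as input, two small hidden layers, ten outputs); real models are usually far larger.

```python
def count_parameters(layer_sizes):
    """Count the weights and biases of a fully connected network."""
    # Every neuron in one layer connects to every neuron in the next layer.
    weights = sum(n_in * n_out for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))
    # One bias per neuron in every layer except the input layer.
    biases = sum(layer_sizes[1:])
    return weights, biases

# Hypothetical sizes: 784 inputs, two hidden layers of 16 neurons, 10 outputs.
layer_sizes = [784, 16, 16, 10]
weights, biases = count_parameters(layer_sizes)
print(weights, biases, weights + biases)  # 12960 42 13002
```

Even this tiny network has about thirteen thousand adjustable numbers, which is why nobody sets them by hand.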

By now, you have probably worked out why we call it deep learning: the data passes through many layers, one after another.

Let us now see how these neural networks learn.

In a neural network, we do not tell the program explicitly how to solve the task. We build the architecture, give the network inputs together with the outputs we want, and let it learn the mapping on its own.

Once trained, the model gives you the desired output for a given input. Deep learning models are trained on huge sets of data, and they can work through far more examples in a given time than a human could.

In a neural network, each layer of neurons uses the output of the previous layer as its input. Each layer attempts to transform this input into a slightly more abstract, composite representation.
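The layer-to-layer flow can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the layer sizes, the random weights and the sigmoid activation are all hypothetical choices, and the network is untrained, so its outputs carry no meaning yet.

```python
import math
import random

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def make_layer(n_inputs, n_neurons, rng):
    """One layer is a list of neurons; each neuron is a (weights, bias) pair."""
    return [([rng.uniform(-1, 1) for _ in range(n_inputs)], rng.uniform(-1, 1))
            for _ in range(n_neurons)]

def forward(layers, inputs):
    """Pass the inputs through every layer; each layer consumes the previous output."""
    activations = inputs
    for layer in layers:
        activations = [
            sigmoid(sum(w * a for w, a in zip(weights, activations)) + bias)
            for weights, bias in layer
        ]
    return activations

rng = random.Random(0)      # fixed seed so the run is repeatable
layer_sizes = [4, 5, 5, 2]  # hypothetical: 4 inputs, two hidden layers, 2 outputs
layers = [make_layer(n_in, n_out, rng)
          for n_in, n_out in zip(layer_sizes, layer_sizes[1:])]

outputs = forward(layers, [0.2, 0.7, 0.1, 0.9])
print(outputs)
```

The `forward` loop is the whole idea: the list of activations is overwritten layer by layer until only the output layer's values remain.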

Let us try to understand this by using a simple example.

Say your neural network is designed to recognise faces. The first layer of the neural network may analyse the brightness of the pixels. The second layer may identify any edges in the images. The third layer may recognise textures and shapes. The next one may identify features like nose and eyes and so on until the final layer gives the output.
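To make the "edges" step a little more concrete, here is a hand-written brightness-difference filter applied to a tiny made-up image. This is an assumption-laden stand-in: a trained network learns its own filters from data rather than being given one, but the learned filters in early layers often respond to edges in a similar way.

```python
# A tiny 3x6 grayscale "image" (made-up values): dark on the left, bright on the right.
image = [
    [0.0, 0.0, 0.0, 1.0, 1.0, 1.0],
    [0.0, 0.0, 0.0, 1.0, 1.0, 1.0],
    [0.0, 0.0, 0.0, 1.0, 1.0, 1.0],
]

def horizontal_edges(img):
    """Absolute brightness difference between each pixel and its right neighbour."""
    return [[abs(row[x + 1] - row[x]) for x in range(len(row) - 1)] for row in img]

edges = horizontal_edges(image)
for row in edges:
    print(row)  # [0.0, 0.0, 1.0, 0.0, 0.0]
```

The filter responds only where the brightness jumps, which is exactly where the vertical edge sits in the image.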

Once the layers have processed the data, we can compare the outputs with the known labels and find out how accurate the network is. We can then use backpropagation to improve its accuracy. After enough training, the network learns to recognise images on its own, without requiring any further human intervention.

Now that you have an overall idea of how a neural network works, let us try to understand what happens inside a neuron.

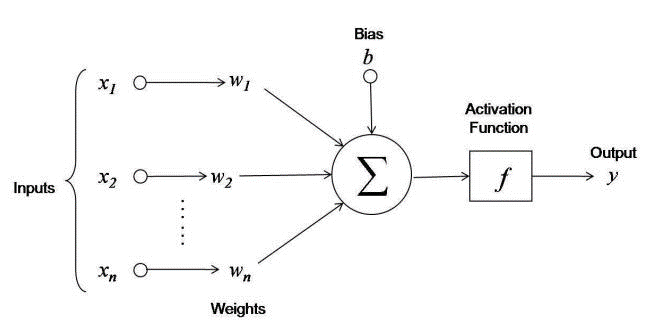

A neuron takes in information in numerical form. This information is represented as an activation value.

The higher this activation value, the more strongly the neuron is activated. In this simplified picture, the activation value of a neuron lies between 0 and 1.

Neurons in every layer except the first receive their inputs from the outputs of neurons in the previous layer. Each connection between two neurons has its own weight attached to it.

A neuron receiving inputs calculates the weighted sum of the activation values it receives. A bias value may be added to this weighted sum.

The neuron finally applies an activation function to the resulting value. The purpose of this activation function is to map the result into a fixed range, such as 0 to 1. In effect, the activation function determines how strongly the neuron passes its signal along.
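The steps inside a single neuron (weighted sum, bias, activation function) can be sketched as follows. The input values, weights and bias are made up for illustration, and the sigmoid is just one common choice of activation function that maps values into the 0 to 1 range.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron_output(inputs, weights, bias):
    """Weighted sum of the incoming activations, plus the bias, then the activation function."""
    weighted_sum = sum(w * a for w, a in zip(weights, inputs))
    return sigmoid(weighted_sum + bias)

# Hypothetical values: three activations arriving from the previous layer.
inputs = [0.5, 0.1, 0.9]
weights = [0.4, -0.6, 0.2]
bias = 0.1

activation = neuron_output(inputs, weights, bias)
print(round(activation, 3))  # 0.603
```

Here the weighted sum is 0.32, the bias lifts it to 0.42, and the sigmoid turns that into an activation of roughly 0.6.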

The activations pass through the entire neural network until they reach the output layer. Here we get the output in a form that we can understand (text, image or sound).

Here is a diagram depicting the operations taking place in a single neuron:

Figure 3: An image depicting the operations taking place in a single neuron.

A neural network has a cost function that compares the actual output with the expected output. In other words, the cost function measures how far off the network is. The lower the value of the cost function, the closer the network's outputs are to the expected ones.
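As a small illustration, here is one widely used cost function, the mean squared error, applied to two made-up sets of outputs. The choice of cost function and the numbers are assumptions for the example; other cost functions (such as cross-entropy) are common as well.

```python
def mean_squared_error(predicted, expected):
    """Average of the squared differences between the outputs and the targets."""
    return sum((p - t) ** 2 for p, t in zip(predicted, expected)) / len(expected)

expected = [1.0, 0.0, 0.0]    # what we want the network to output
good_guess = [0.9, 0.1, 0.2]  # made-up output that is close to the target
bad_guess = [0.2, 0.7, 0.6]   # made-up output that is far from the target

good_cost = mean_squared_error(good_guess, expected)
bad_cost = mean_squared_error(bad_guess, expected)
print(good_cost, bad_cost)
```

The output that sits closer to the expected values gets the lower cost (about 0.02 against about 0.50), and a perfect output would score exactly 0.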

Once the cost function has been evaluated, the error information travels backwards through the network, and the network tries to minimize the cost function by tweaking the weights. This process is called backpropagation.

Because of the way deep learning models are structured, all the weights can be adjusted together in a single update step.
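Here is a minimal sketch of this learning loop on the smallest possible case: a single sigmoid neuron trained by gradient descent to reproduce the logical OR function. With only one neuron, "travelling backwards" reduces to a single application of the chain rule; a full network repeats the same step layer by layer. The training data, learning rate and number of passes are assumptions picked for this toy example.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def predict(x, weights, bias):
    return sigmoid(sum(w * xi for w, xi in zip(weights, x)) + bias)

# Toy training set for logical OR: (inputs, expected output).
data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 1.0)]

def cost(weights, bias):
    """Mean squared error over the whole training set."""
    return sum((predict(x, weights, bias) - t) ** 2 for x, t in data) / len(data)

weights = [0.0, 0.0]
bias = 0.0
learning_rate = 1.0  # assumed value, chosen only for this toy example
initial_cost = cost(weights, bias)

for epoch in range(2000):
    grad_w = [0.0, 0.0]
    grad_b = 0.0
    for x, target in data:
        out = predict(x, weights, bias)
        # Chain rule: d(cost)/d(weighted sum) = 2 * (out - target) * sigmoid'(z),
        # where sigmoid'(z) = out * (1 - out).
        delta = 2.0 * (out - target) * out * (1.0 - out)
        for i, xi in enumerate(x):
            grad_w[i] += delta * xi / len(data)
        grad_b += delta / len(data)
    # Both weights and the bias are updated together in one step.
    weights = [w - learning_rate * g for w, g in zip(weights, grad_w)]
    bias -= learning_rate * grad_b

final_cost = cost(weights, bias)
print(initial_cost, final_cost)
print([round(predict(x, weights, bias)) for x, _ in data])
```

The cost starts at 0.25 (every output is 0.5 before training) and shrinks as the weights are nudged against the gradient, until the rounded outputs match the OR targets.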

So, I hope this introduction to deep learning has given you a surface-level understanding of how deep learning works.

Let us now have a look at what deep learning can do for you. Below are some of the use cases of deep learning:

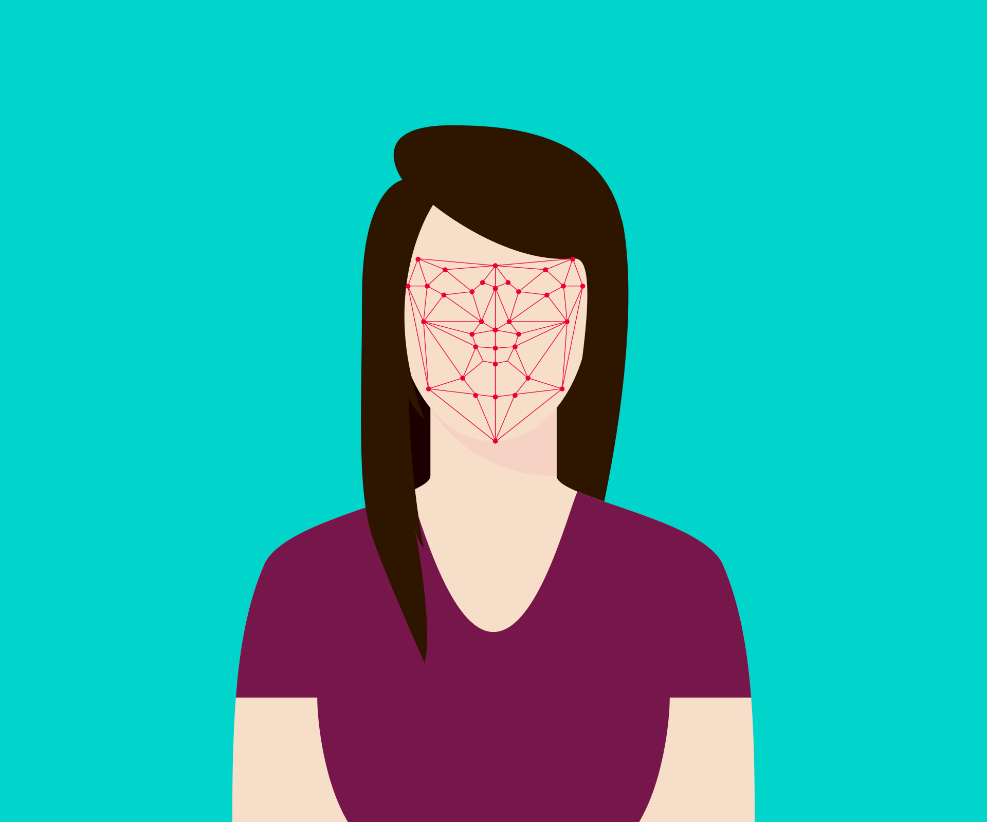

1) Image Recognition

Figure 4: Deep learning models are being widely used for facial recognition.

Deep learning algorithms can identify arbitrary objects within larger images. This capability is used in engineering applications to identify shapes for modelling. It is also used for tagging users on social networks. DeepFace, used by Facebook, is a classic example in this regard.

2) Speech Recognition

Figure 5: Virtual assistants like Alexa are successful applications of deep learning in voice recognition.

Speech recognition is one of the most widely used applications of deep learning. Alexa, Siri, Google Home and Cortana all rely on deep learning models.

3) Text Analysis

Figure 6: Deep learning algorithms find extensive usage in text analysis.

Text analysis is one of the significant applications of Natural Language Processing (NLP). In NLP, a large chunk of text is broken down into smaller units so that the machine can handle it. It is then analysed for intent, sentiment or relevance to a specific topic.
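The "breaking down" step can be sketched as tokenization followed by a simple word count (a bag of words). This is a deliberately basic illustration, not a deep learning model: the sample sentence is made up, and modern NLP systems typically use more elaborate tokenizers and learned representations.

```python
import re
from collections import Counter

def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())

def bag_of_words(tokens, vocabulary):
    """Count how often each vocabulary word occurs in the tokens."""
    counts = Counter(tokens)
    return [counts[word] for word in vocabulary]

review = "The battery is great, but the screen is not great."  # made-up sample text
tokens = tokenize(review)
vocabulary = sorted(set(tokens))
vector = bag_of_words(tokens, vocabulary)

print(tokens)
print(dict(zip(vocabulary, vector)))
```

The resulting list of numbers is something a network's input layer can accept, whereas the raw sentence is not.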

4) Drug Discovery

Figure 7: Atomwise has been using neural networks to facilitate drug discovery.

Atomwise, a start-up founded in 2012, is capitalizing on deep learning to shorten the process of drug discovery. Its software, AtomNet, uses neural networks to study molecules and predict how they might act in the human body, including their efficacy, toxicity and side-effects. This reduces the time involved in synthesizing and testing new compounds. Atomwise currently screens more than 10 million compounds every single day.

5) Cyber Security

Figure 8: Deep learning can reduce the risks and expenses involved in threat detection.

Deep learning can reduce the risks and expenses related to threat detection in cybersecurity. Some deep learning models are reported to reach detection rates as high as 99%. Sophisticated neural networks have been built for intrusion detection, malware detection and malicious code detection.

Here is a table depicting the use cases for deep learning:

| Use Case | Industry |
| --- | --- |
| **Image** | |
| Facial Recognition | Social Media |
| Face Unlock | Smartphones |
| Machine Vision | Automotive |
| **Text** | |
| Sentiment Analysis | CRM, Social Media |
| Fraud Detection | Finance |
| Email Filtering | Digital Marketing |
| **Sound** | |
| Voice Search | Smartphones |
| Voice Recognition | Internet of Things |
| Engine Noise Detection | Automotive |
| **Video** | |
| Motion Detection | Gaming, UI-UX |
| Threat Detection | Airports |
| **Time Series** | |
| Log Analysis | Finance, Data Centres |
| Recommendation Engine | E-commerce |
| Enterprise Resource Planning | Manufacturing, Supply Chain |
| Business Analytics | Finance, Economics |
| Predictive Analytics from Sensor Data | Manufacturing, Internet of Things |

Source: Pathmind.com

So, that’s all on deep learning. If you want to dig deeper into neural networks and deep learning, check out the following resources:

The Complete Beginner’s Guide to Deep Learning: Artificial Neural Networks

A Beginner’s Guide to Neural Networks and Deep Learning

I found these resources immensely helpful, and I hope they will help you too.

Thanks for reading!